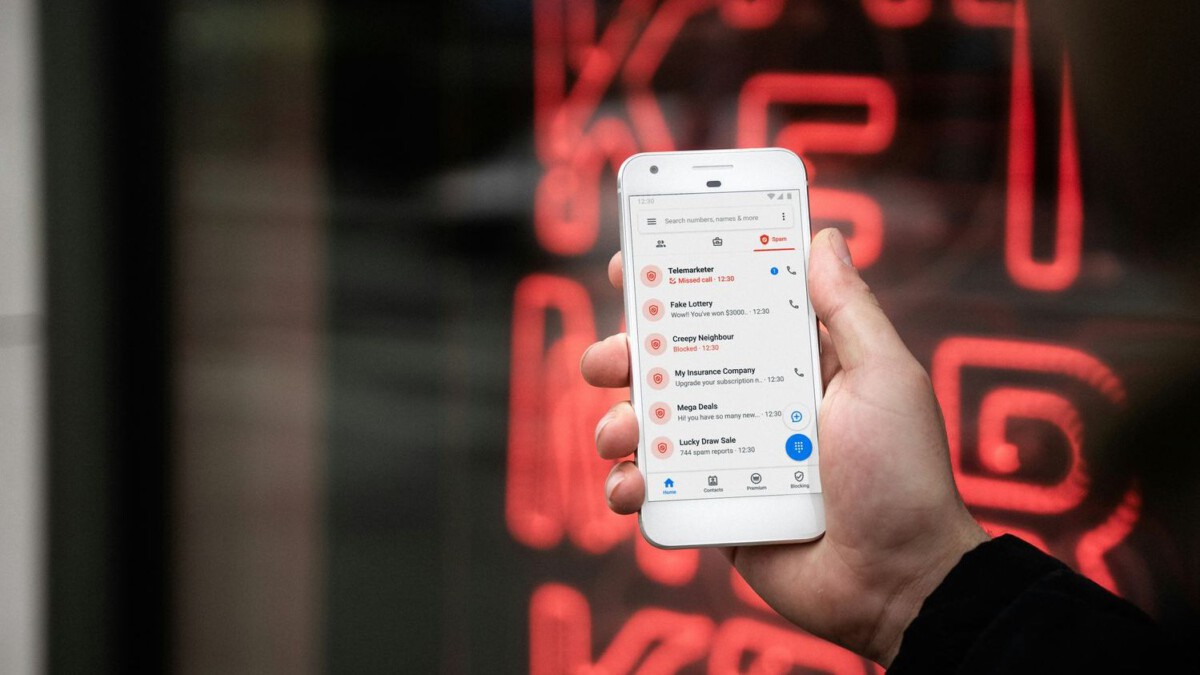

Scammers Are Using AI to Target You – Don’t Get Caught Off Guard – Image for illustrative purposes only (Image credits: Unsplash)

A frantic phone call from a familiar voice claims a loved one faces an emergency, urging immediate wire transfers. Such scenarios have become alarmingly common as scammers deploy artificial intelligence to clone voices and craft deepfake videos, ensnaring everyday Americans in sophisticated fraud schemes. Federal Trade Commission data revealed that consumers reported $12.5 billion in fraud losses in 2025, with AI amplifying the reach and believability of these attacks.[1][2] Financial repercussions extend beyond immediate losses, often leading to prolonged battles with identity theft and credit damage.

AI Fuels a Sharp Rise in Fraud Losses

Scammers harnessed AI tools more aggressively in 2025, contributing to a surge in reported incidents. Voice deepfake attacks alone increased by 680% that year, with over 100,000 cases documented in the United States.[3] Experian forecasted that AI-powered scams would intensify further in 2026, building on the prior year’s $12.5 billion toll.[1]

Consumer Reports highlighted how these technologies enable microtargeting, allowing fraudsters to impersonate banks, the IRS, or relatives with eerie precision. Social media platforms emerged as prime launchpads, where scams originating on sites like Facebook generated $2.1 billion in losses in 2025 alone – an eightfold jump since 2020.[2] Investment pitches and job offers, enhanced by AI-generated testimonials, proved particularly lucrative for criminals.

Voice Cloning: The Deceptive Emergency Call

One of the most insidious tactics involves AI voice cloning, where scammers replicate a family member’s speech from mere seconds of audio gleaned from social media. Victims receive calls depicting a grandchild in jail or a child stranded abroad, demanding urgent funds via untraceable methods like gift cards or cryptocurrency.[4] These schemes prey on emotions, bypassing logical scrutiny.

Deepfake audio has infiltrated business settings too, with fraudsters posing as executives to authorize fake transfers. A single such incident in Hong Kong resulted in massive corporate losses, underscoring the tactic’s potency against individuals and firms alike.[5] Detection grows harder as consumer-grade apps lower the barrier for criminals.

Deepfakes and Phishing Escalate Identity Risks

Beyond audio, AI generates realistic videos and images for romance scams, fake job interviews, and rental frauds. Scammers create personas that build trust over time, then solicit payments for fabricated crises. Phishing evolves with AI-drafted emails that mimic legitimate sources, evading spam filters and incorporating personal details for credibility.[6]

These methods facilitate identity theft by tricking users into revealing sensitive data like Social Security numbers or bank details. Once obtained, stolen identities fuel further crimes, from unauthorized loans to drained accounts. Deloitte projected U.S. generative AI fraud losses could hit $40 billion annually by 2027, a compound annual growth rate of 32% from 2023 levels.[7]
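
To make the compounding concrete, the short sketch below works backward from the two figures quoted above ($40 billion by 2027, 32% annual growth over the four years from 2023). The function name is illustrative, and the result is only the baseline implied by those two rounded numbers, not a figure taken from the Deloitte report, whose own baseline may differ.

```python
def implied_base(final_value: float, annual_rate: float, years: int) -> float:
    """Back out the starting value implied by compound annual growth."""
    return final_value / (1 + annual_rate) ** years


# Figures quoted in the text: $40 billion by 2027, 32% compound growth from 2023.
base_2023 = implied_base(40.0, 0.32, 2027 - 2023)
print(f"Implied 2023 losses: ${base_2023:.1f} billion")  # Implied 2023 losses: $13.2 billion
```

In other words, growth of 32% a year roughly triples losses in four years.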

Essential Defenses Against AI-Driven Scams

Protection begins with skepticism toward unsolicited contacts, especially those invoking urgency. Always verify claims independently by calling a known number from an official source rather than calling back a number the contact provides. Enabling multi-factor authentication on accounts adds a vital layer, while freezing credit files blocks fraudsters from opening new credit accounts with stolen details.[8]

Here are key steps to fortify defenses:

- Audit privacy settings on social media to limit audio and photo access for cloning.

- Hover over links in emails, or press and hold them in texts, to check destinations before clicking.

- Enroll in free credit monitoring and place fraud alerts with the three major bureaus.

- Use unique, strong passphrases and a password manager.

- Report suspicions promptly to the FTC at ReportFraud.ftc.gov.
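
The link-checking step in the list above can be illustrated with a small sketch. It assumes a hypothetical helper, `host_belongs_to`, that compares a link's real hostname against a domain the reader already trusts. The lookalike URLs are invented for illustration, and the check does not follow redirects or detect lookalike characters, so it is a teaching aid rather than a phishing detector.

```python
from urllib.parse import urlsplit


def host_belongs_to(url: str, trusted_domain: str) -> bool:
    """Return True if the URL's host is trusted_domain or a subdomain of it."""
    # hostname is None for malformed or scheme-less input; treat that as untrusted.
    host = (urlsplit(url).hostname or "").lower().rstrip(".")
    trusted = trusted_domain.lower()
    return host == trusted or host.endswith("." + trusted)


# The genuine FTC reporting site is a subdomain of ftc.gov.
print(host_belongs_to("https://reportfraud.ftc.gov/", "ftc.gov"))  # True
# Hypothetical lookalike: the trusted name appears, but the real domain is the end of the host.
print(host_belongs_to("https://ftc.gov.claims-help.example/login", "ftc.gov"))  # False
# Hypothetical trick: text before "@" is a username, not the destination.
print(host_belongs_to("https://ftc.gov@203.0.113.7/", "ftc.gov"))  # False
```

The point is that the destination is decided by the end of the hostname, not by a trusted name appearing somewhere in the link.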

Financial institutions increasingly deploy AI countermeasures, but personal vigilance remains crucial. Educating family members, particularly seniors, about these tactics reduces collective vulnerability.

Recovery Paths After a Scam Hits

If a scam succeeds, act swiftly to minimize damage. Contact banks to freeze accounts and dispute charges, then file a police report for documentation. The FTC advises filing an identity theft report online at IdentityTheft.gov, which unlocks free recovery resources.[9]

Though only about 25% of victims recover their funds in full, early reporting improves the odds. Over the long term, monitor credit reports weekly via AnnualCreditReport.com and consider identity theft protection services. Scammers adapt quickly, so ongoing awareness protects not just wallets but peace of mind.

As AI tools proliferate, the human cost of these scams – stolen savings, eroded trust, fractured relationships – demands proactive measures from every American. Staying informed equips individuals to reclaim control amid technological threats.